In my last post (October 23rd), I began summarizing an EE Times Special Project on growing concerns over technology reliability and safety. In that post, I looked at the sections of the report that focused on a couple of the recent well-publicized problem areas: hoverboards and Boeing 737s. If we just pay attention to the problems, it can be overwhelming. Fortunately, the report also provides some suggestions for addressing this critical issue.

George Leopold (principal author of the project report) writes about the “hard-learned lessons” that led NASA to become more reliability conscious. His focus is on the Apollo missions, starting with the disastrous Apollo 1 launchpad fire (January 1967), which killed three astronauts. The fire was attributed to NASA having traded off safety and reliability in order to meet an aggressive schedule.

Among the causes was “Go Fever,” a group-think mentality that blinded otherwise brilliant aerospace engineers from seeing problems right in front of their noses.

The good that came out of Apollo 1 was a new commitment to safety. One of the prime areas was making sure that all components of the Apollo Saturn V rocket – which powered the first manned moon landing in July 1969 – were redundant, and in many instances had multiple redundancy. Safety and reliability also became imperatives of the design process. Finally, NASA engineers began doing more testing, always keeping in mind that three men had lost their lives on Apollo 1. No one wanted a repeat of that.

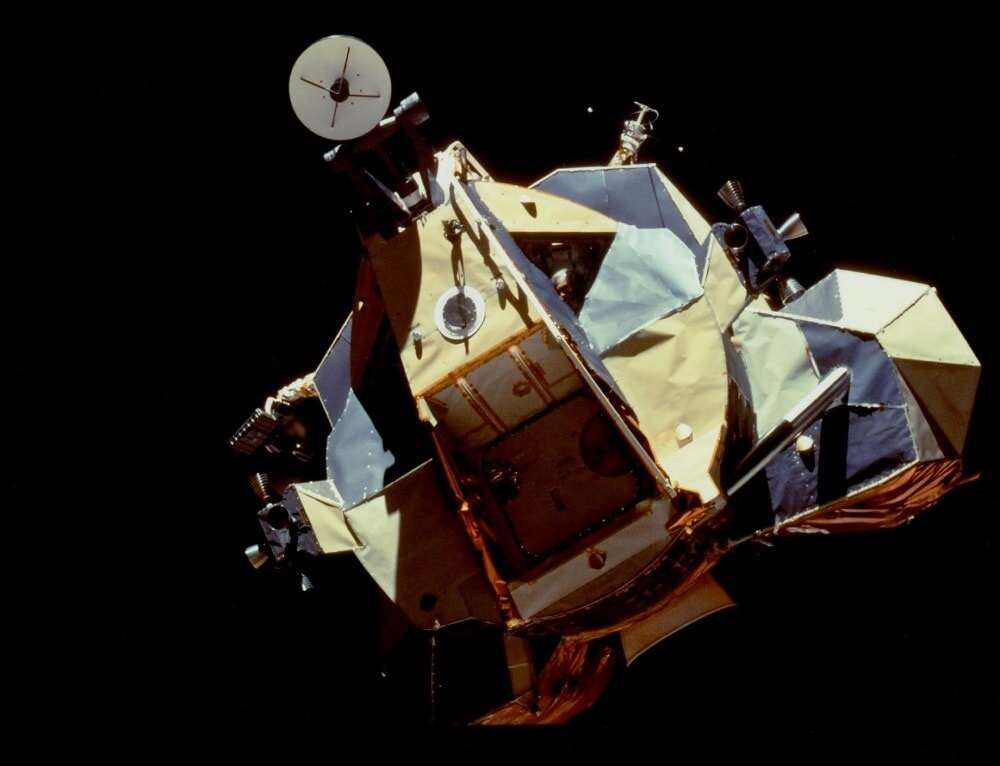

Project Apollo rose from the ashes of a disaster, and great space-faring machines were built that took 24 humans to the moon.

The NASA example is, of course, interesting, especially given that we recently celebrated the 50th anniversary of the first moon landing. It would have been more interesting if this section of the report had included analysis of what went wrong with the Space Shuttle Challenger…

In his chapter of the report, “Design for reliability: You have the tools,” Martin Rowe gives us a more technically detailed (and, to me, more absorbing) look at what engineers need to do to create reliable systems.

He’s a big proponent of bringing in reliability engineers early on:

Reliability engineers look for and analyze possible failure conditions (modes) to determine each failure’s severity and probability of occurrence. “Bring in reliability people at the start,” said Ken Rispoli, who recently retired as Sr. Principal Engineer at Raytheon Integrated Defense Systems. “They can point to problems that designers might overlook.”

It’s necessary to “define the failure”.

Failure analysis comes down to risk. How much risk of failure is acceptable depends on the product’s intended use cases and the consequences of the failure. If lives or large sums of money are at stake, then you need to minimize risk to the greatest extent possible. Risk also depends on the expected lifetime of a product.
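To make that idea concrete, here’s a minimal sketch in Python. It assumes risk can be scored as the probability of a failure multiplied by the cost of its consequence, which is one common simplification and not necessarily the method used in the report. The `FailureMode` class, the example failure modes, the numbers, and the threshold are all hypothetical, made up purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    probability: float   # assumed chance of occurrence over the product lifetime (0..1)
    consequence: float   # assumed cost of the failure, in arbitrary units


def risk(mode: FailureMode) -> float:
    """Expected loss: probability of the failure times its consequence."""
    return mode.probability * mode.consequence


def unacceptable(modes, threshold):
    """Return the modes whose risk exceeds the acceptable threshold, worst first."""
    flagged = [m for m in modes if risk(m) > threshold]
    return sorted(flagged, key=risk, reverse=True)


# Hypothetical failure modes; the values are illustrative only.
modes = [
    FailureMode("connector corrosion", probability=0.10, consequence=50.0),
    FailureMode("power supply short", probability=0.01, consequence=10_000.0),
    FailureMode("LED burnout", probability=0.20, consequence=5.0),
]

for m in unacceptable(modes, threshold=4.0):
    print(f"{m.name}: risk {risk(m):.1f}")
```

Notice that the rare but expensive power supply short ends up at the top of the list, ahead of failures that happen far more often. That’s the point of weighing consequences along with probability: where lives or large sums of money are at stake, the acceptable threshold drops accordingly.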

When it comes to reliability planning and analysis, engineers have the following in their tool kit:

- Stress analysis

- Mean time between failures (MTBF)/mean time to failure (MTTF)

- Failure mode and effects analysis (FMEA)/Failure mode, effects and criticality analysis (FMECA)

- Worst-case analysis
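Two of the tools in this list lend themselves to a quick back-of-the-envelope sketch. The Python below is my own illustration, not taken from Rowe’s article. It assumes a constant failure rate (the exponential model often paired with MTBF) and uses the risk priority number (severity × occurrence × detection, each rated 1 to 10), a ranking commonly used in FMEA. The function names and the sample figures are hypothetical.

```python
import math


def mtbf(total_operating_hours: float, failures: int) -> float:
    """Mean time between failures: operating hours divided by failure count."""
    if failures <= 0:
        raise ValueError("need at least one observed failure to estimate MTBF")
    return total_operating_hours / failures


def reliability(mission_hours: float, mtbf_hours: float) -> float:
    """Probability of surviving mission_hours, assuming a constant failure rate."""
    return math.exp(-mission_hours / mtbf_hours)


def rpn(severity: int, occurrence: int, detection: int) -> int:
    """Risk priority number for one failure mode; each factor is rated 1 to 10."""
    for rating in (severity, occurrence, detection):
        if not 1 <= rating <= 10:
            raise ValueError("ratings must be between 1 and 10")
    return severity * occurrence * detection


# Illustrative figures only: 5 failures observed over 50,000 unit-hours.
estimate = mtbf(total_operating_hours=50_000, failures=5)
print(f"MTBF: {estimate:.0f} h")
print(f"Chance of running 1,000 h without failure: {reliability(1_000, estimate):.3f}")
print(f"RPN: {rpn(severity=8, occurrence=3, detection=4)}")
```

With these made-up numbers, the MTBF comes out to 10,000 hours, yet the chance of a single unit running 1,000 hours without a failure is only about 90%. An MTBF figure is a statistical average across many units, not a promise about how long any one of them will last.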

Rowe goes into a bit of detail here, and provides some good examples. Make sure you check out his full article.

At Critical Link, we’re typically working with applications that really matter, and we’re proud of our commitment to reliability and safety. It’s always good to be reminded why reliability and safety are so important, and that there’s a lot we can do about them.

This is the second in a two-part series based on the EE Times/AspenCore Special Project on technology reliability and safety. You can find the first part here.