Since the pandemic hit our shores earlier this year, we’ve been keeping an eye out for ways in which the electronics industry has responded. Several of our earlier posts have addressed this. In Tech Takes on the Coronavirus, I wrote about two Italian innovators who’d come up with a way to use 3D printers to create valves needed for ventilators. Oura Rings Enlisted in the Fight Against COVID talked about this technology, which the NBA planned to use to keep players in their bubble safe. Most of us have gotten used to social distancing, and in this post I took a look at some applications that enable social distancing in a retail setting.

EDN shares our interest in this topic, and they recently asked a provocative question: “Has the electronics industry done enough tackling the pandemic?”

To answer this and other questions, EDN/AspenCore pulled together a series on “real design solutions related to diagnostics, treatment, surveillance and prevention of the spread of the novel coronavirus,” which I’ve been reading on EE Times.

In this post, I’m providing a very brief summary of what’s in the first article in their series. (The remaining articles will be fodder for future posts.)

Majeed Ahmad offered a “sneak peek into the brand-new design ecosystem built around the fight against the coronavirus pandemic.” He began with automated ways to detect and maintain social distancing, and the role that photoelectric proximity sensors, combined with miniature programmable logic controllers (PLCs), are playing. The sensors determine which way the traffic is flowing, and the PLCs count the number of people coming and going and provide red light-green light data.
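To make the counting idea concrete, here is a minimal sketch of the logic in Python. It is my own illustration, not code from the EDN article or from any actual PLC: I'm assuming two sensor beams at a doorway (which I've called "outer" and "inner"), with the order in which they trip indicating direction, and a hypothetical `capacity` limit that decides the light color.

```python
from dataclasses import dataclass


@dataclass
class OccupancyCounter:
    """Hypothetical doorway counter; names and layout are assumptions."""

    capacity: int   # assumed maximum number of people allowed inside
    count: int = 0  # people currently inside

    def record(self, first_beam: str, second_beam: str) -> None:
        """Update the count from the order in which the two beams tripped."""
        if (first_beam, second_beam) == ("outer", "inner"):
            self.count += 1                      # someone walked in
        elif (first_beam, second_beam) == ("inner", "outer"):
            self.count = max(0, self.count - 1)  # someone walked out
        # Any other combination is treated as noise and ignored.

    def light(self) -> str:
        """Green while there is room for one more person, red otherwise."""
        return "green" if self.count < self.capacity else "red"


counter = OccupancyCounter(capacity=2)
counter.record("outer", "inner")
counter.record("outer", "inner")
light_when_full = counter.light()
counter.record("inner", "outer")
light_after_exit = counter.light()
```

A real installation would run this kind of logic on the PLC itself and would need to handle people pausing or turning around in the doorway, which this sketch ignores.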

The next element of the coronavirus design ecosystem he introduces is COVID testing via camera. Using thermal imaging cameras to take someone’s body temperature is a fast, efficient, and contact-free way to figure out who’s running a fever. When the camera detects an elevated temperature, an alarm is triggered, and the person can be taken aside for further screening.
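The alarm step boils down to a threshold check. The sketch below is my own simplification, not how any particular camera works: it assumes the camera hands over a frame as a grid of per-pixel temperatures in degrees Celsius, and it uses 38.0 °C as an assumed fever cutoff. The function names are hypothetical.

```python
FEVER_THRESHOLD_C = 38.0  # assumed cutoff; real systems calibrate their own


def hottest_reading(frame):
    """Return the highest temperature in a frame (a list of rows of floats)."""
    return max(max(row) for row in frame)


def needs_further_screening(frame, threshold_c=FEVER_THRESHOLD_C):
    """True when the hottest pixel meets or exceeds the threshold."""
    return hottest_reading(frame) >= threshold_c


normal_frame = [[36.1, 36.5], [36.4, 36.9]]
fever_frame = [[36.1, 36.5], [36.8, 38.4]]
```

Skin temperature read by a camera is only a rough proxy for core body temperature, which is why a hit leads to further screening rather than a diagnosis.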

A third area focuses on pre-diagnostic screening, and I found this one particularly interesting. The approach Majeed writes about is predicated on analyzing samples of coughing sounds to “identify unique cough patterns associated with COVID-19 infections.”

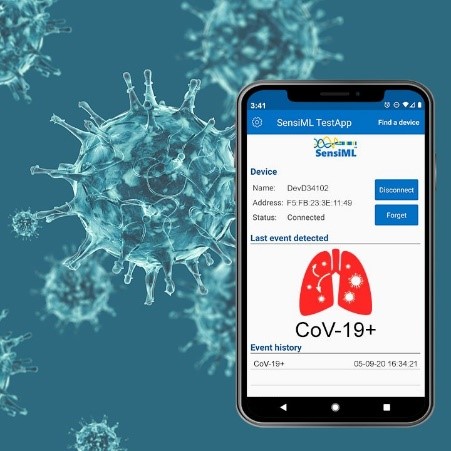

SensiML, a subsidiary of QuickLogic, which develops software solutions for ultra-low-power IoT end-points, is crowdsourcing the cough samples from healthcare facilities to create large datasets. Next, it’s going to apply the AI-based cough analysis algorithms to these datasets to build an efficient COVID-19 screening mechanism.

Given that we all get a bit panicked when someone near us starts coughing (even if they’re wearing a mask and six feet away), it would be great if someone could figure out how to tell a possible COVID infection from a tickle in the throat.

The final part of the ecosystem Majeed sees emerging is wireless patches for contact tracing. These wearables would be equipped with temperature sensing chips. “The low-cost and no-touch temperature sensing tags can remotely sense body temperature and ensure that people with symptoms—having placed the patch on the skin—can stay at home.”

So far, all the articles I’ve read in this series have been worth the read. The electronics industry isn’t going to cure COVID-19, but it’s certainly going to help us work our way through the pandemic while we await a viable vaccine. And it will help us be better prepared to respond to anything that happens in the future.

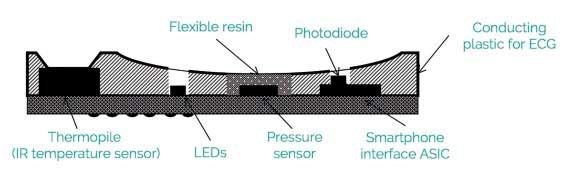

thermopile measures heat radiation to estimate body temperature. The application-specific integrated circuit designed by LMD controls the LEDs; captures and digitizes data from the photodiode, pressure sensor, and thermopile; and communicates with the mobile phone processor. (Source:
